The tech industry has a nasty habit of rebranding “efficiency” as “innovation,” and right now, no one is doing that better than the architects of Mixture-of-Experts (MoE). We’re being sold this dream that we can finally have our cake and eat it too—massive, god-like intelligence without the soul-crushing computational costs. But as someone who spent years designing smart devices only to watch them become bloated, attention-hungry monsters, I can’t help but feel a sense of déjà vu. Are we actually making AI smarter, or are we just building a cleverer shell game where we hide massive parameter counts behind a curtain of selective activation?

I’m not here to walk you through a dry white paper or repeat the breathless marketing jargon you’ve already seen on Twitter. Instead, I want to pop the hood and look at the actual mechanics of how MoE functions and, more importantly, what it means for the future of sustainable intelligence. My goal is to help you understand whether this architecture is a genuine leap toward more intentional, focused technology, or if it’s just another way to mask the sheer, unbridled scale of our digital ambitions.

Table of Contents

- Sparse Activation vs. Dense Models: The Illusion of Intelligence

- The Hidden Computational Cost of MoE Training

- Navigating the MoE Maze: How to Stay Intentional in an Era of Fragmented Intelligence

- The Bottom Line: Efficiency, Ethics, and the MoE Trade-off

- The Specialization Trap

- The Verdict on the Expert Illusion

- Frequently Asked Questions

Sparse Activation vs. Dense Models: The Illusion of Intelligence

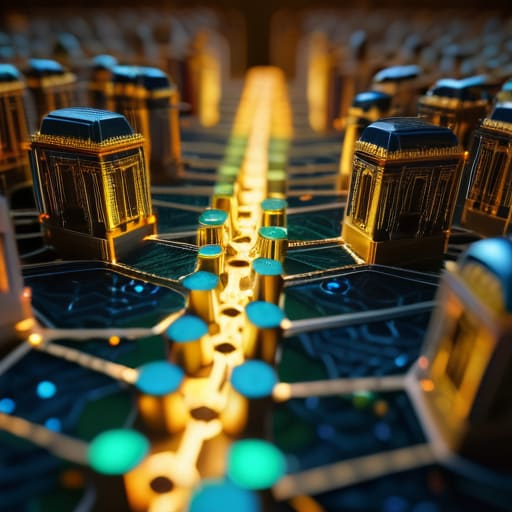

To understand why everyone is suddenly obsessed with this architecture, you have to look at the fundamental tug-of-war between sparse activation vs dense models. Think of a traditional dense model like a massive, heavy steam engine: every single gear, piston, and valve has to move just to turn a single wheel. It’s brute force, and it’s incredibly expensive in terms of energy and time. If you want more power, you just build a bigger engine, but you end up burning through resources at an unsustainable rate.

MoE changes the game by introducing what researchers call conditional computation. Instead of firing every neuron for every single prompt, the system uses a small gating network that acts like a sophisticated switchboard operator. For each token, the “operator” scores the available “experts” and sends the work to only a handful of them, leaving the rest asleep. The popular picture is that a question about poetry wakes the linguistics experts while the math and coding modules doze; in practice the routing happens token by token at each MoE layer, and the specialties the experts learn tend not to line up so neatly with human subjects. Either way, it creates this clever illusion of infinite intelligence, but we have to wonder: are we actually creating more profound reasoning, or are we just getting better at efficiently skimming the surface of vast amounts of data?
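
To make the switchboard image a little more concrete, here is a minimal sketch of top-k gating for a single token. It is an illustration under assumptions, not any particular model’s implementation: the function names, the four toy “experts,” and the router scores are all made up, and real systems do this with learned weights over large batches of tokens.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k=2):
    """Pick the top_k experts for one token and renormalise their weights."""
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}

def moe_layer(token, experts, router_scores, top_k=2):
    """Run only the chosen experts and blend their outputs by gate weight."""
    gates = route_token(router_scores, top_k)
    return sum(weight * experts[i](token) for i, weight in gates.items())

# Hypothetical toy experts: each one is just a scalar function.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
scores = [0.1, 2.0, -1.0, 1.5]  # made-up router scores for one token

gates = route_token(scores, top_k=2)
output = moe_layer(3.0, experts, scores, top_k=2)
print(gates, output)
```

With these made-up scores, only experts 1 and 3 ever run; the other two contribute nothing to the output, which is the “selective activation” the rest of this piece keeps poking at.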

The Hidden Computational Cost of MoE Training

On paper, the math looks like a miracle: we get more “brainpower” without a linear explosion in the energy bill. But if we peel back the curtain, the computational cost of MoE training tells a much more complicated story. It’s not just about the raw FLOPs; it’s about the sheer logistical nightmare of orchestrating these specialized sub-units. When you move from a single, cohesive model to a fragmented architecture, you aren’t just adding complexity—you’re adding a massive layer of management overhead.

Think of it like my workshop. It’s one thing to have a single, well-organized workbench; it’s an entirely different beast to manage a dozen specialized stations, each requiring its own tools, lighting, and dedicated specialist. In a neural network, this is the gating network’s job. These tiny, high-stakes traffic controllers have to decide, for every token at every MoE layer, exactly where to route each piece of data. In large training runs the experts are often spread across many devices, so tokens have to be shuffled between machines, and if the router keeps favoring the same few experts, some sit overloaded while others idle. If the routing fails or becomes inefficient, the whole system stutters. We aren’t just building smarter models; we’re building incredibly complex orchestration puzzles that demand more from our hardware than we ever dared to admit.
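
Here is a rough sketch of what a routing bottleneck can look like. It assumes one common way of handling imbalance, a fixed per-expert capacity beyond which tokens are simply dropped; the `dispatch` function, the capacity factor of 1.25, and the token assignments are hypothetical and chosen only to show the effect.

```python
from collections import Counter

def dispatch(assignments, num_experts, capacity_factor=1.25):
    """Assign tokens to experts, dropping any that exceed per-expert capacity."""
    # Each expert gets an equal share of the batch plus some slack (assumed policy).
    capacity = int(capacity_factor * len(assignments) / num_experts)
    load = Counter()
    dropped = 0
    for expert in assignments:
        if load[expert] < capacity:
            load[expert] += 1
        else:
            dropped += 1  # this token skips the expert layer entirely
    return capacity, load, dropped

# Made-up routing decisions for 16 tokens over 4 experts, skewed toward expert 0.
assignments = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 2, 2, 2, 3, 0]
capacity, load, dropped = dispatch(assignments, num_experts=4)
print(capacity, dict(load), dropped)  # 5 {0: 5, 1: 3, 2: 3, 3: 1} 4
```

In this toy batch, a quarter of the tokens never reach an expert because one station is swamped while another handles a single token. That is the kind of management overhead that training setups have to spend extra effort balancing.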

Navigating the MoE Maze: How to Stay Intentional in an Era of Fragmented Intelligence

- Look beyond the “efficiency” label; when a model uses MoE, it’s often trading a cohesive, unified logic for a collection of specialized fragments, so always double-check its reasoning for consistency.

- Don’t mistake scale for depth; just because a model has trillions of parameters via sparse activation doesn’t mean it’s “thinking” harder—it might just be choosing a more efficient path to a shallow answer.

- Watch the energy footprint, not just the latency; MoE models might feel faster on your screen, but the massive infrastructure required to route those “expert” queries carries a heavy, invisible environmental tax.

- Audit the specialization bias; since different “experts” within the model handle different tasks, be wary of how the routing mechanism might inadvertently prioritize certain types of data patterns over nuanced human context.

- Demand transparency in architectural design; we need to move past the “black box” era and push for developers to explain how these expert pathways are trained, ensuring they aren’t just reinforcing the same digital echo chambers.

The Bottom Line: Efficiency, Ethics, and the MoE Trade-off

MoE isn’t a magic wand for intelligence; it’s a clever architectural trick that trades “all-in” cognitive depth for specialized speed, leaving us to wonder if we’re losing the connective tissue of true reasoning.

We have to look past the “efficiency” marketing to see the massive energy and data hunger still lurking beneath the surface. Just because a model activates fewer parameters per token doesn’t mean the footprint is any smaller: every expert still has to be trained, stored, and kept ready in memory, whether or not it gets picked.
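
The gap between what a model uses per token and what it has to keep on hand is easy to put into numbers. The figures below are entirely hypothetical (they do not describe any real model), and the split into “shared” and “per-expert” parameters is a simplification.

```python
def moe_param_counts(shared, per_expert, num_experts, top_k):
    """Return (total parameters stored, parameters active for one token)."""
    total = shared + per_expert * num_experts
    active = shared + per_expert * top_k
    return total, active

# Hypothetical model: 2B shared parameters, 8 experts of 5B each, 2 active per token.
total, active = moe_param_counts(
    shared=2_000_000_000, per_expert=5_000_000_000, num_experts=8, top_k=2
)

bytes_per_param = 2  # assumes 16-bit weights
memory_gb = total * bytes_per_param / 1e9

print(total, active, memory_gb)  # 42000000000 12000000000 84.0
```

In this made-up case each token touches 12 billion parameters, but the hardware still has to hold all 42 billion, roughly 84 GB at 16-bit precision, before a single query is answered.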

As we shift toward these fragmented, modular systems, our challenge is to ensure we aren’t just building faster, more reactive machines that lack the holistic understanding required to actually serve human needs.

The Specialization Trap

“We’re treating AI like a massive, sprawling department store where we only turn the lights on in the aisles we’re currently browsing. It’s brilliant for efficiency, sure, but I can’t help but wonder if we’re sacrificing the kind of holistic, interconnected wisdom that comes from a system that actually has to hold everything in its mind at once.”

Javier "Javi" Reyes

The Verdict on the Expert Illusion

At the end of the day, Mixture-of-Experts is a brilliant piece of engineering that feels a bit like a sleight of hand. We’ve seen how it manages to bypass the brute-force limitations of dense models by selectively activating specific “experts,” offering us a way to scale intelligence without immediately melting our data centers. But as we’ve dissected, this efficiency comes with a heavy tax: the massive computational overhead of training these fragmented architectures and the risk that we are simply building faster engines rather than actually smarter minds. We aren’t necessarily creating a more holistic understanding of the world; we are just perfecting the art of routing queries to the most efficient specialized silo.

As we move deeper into this era of specialized AI, I find myself thinking back to my days in industrial design. We often mistake a more streamlined process for a better product, but true quality lies in the integration, not just the speed of the parts. As users and critics, our job isn’t to just marvel at how much more data we can process per second, but to ask if these models are actually becoming more human-centric in their reasoning. Let’s stop being blinded by the sheer scale of the machinery and start demanding technology that offers genuine depth rather than just a more efficient way to mimic it.

Frequently Asked Questions

If we're only using a fraction of the model's brain at any given time, are we actually losing the "connective tissue" that allows for true, holistic reasoning?

That’s the million-dollar question, isn’t it? In my design days, we called this the “fragmentation trap.” When you specialize a system too much, you risk losing the holistic synergy that makes a design feel intuitive. By partitioning the “brain,” we might be trading deep, cross-disciplinary synthesis for mere efficient retrieval. We’re essentially building a massive library where every book is in a different room; the facts are there, but the magic happens in the connections between them.

Does the sheer complexity of routing information between these "experts" create a black box so deep that even the engineers won't truly understand why a model makes a specific mistake?

We’re moving from monolithic structures to these hyper-fragmented, specialized networks. By adding a routing layer to decide which “expert” handles which token, we’ve essentially added a middleman to the decision-making process. It’s like trying to debug a massive, automated factory where the parts are constantly being rerouted by an invisible foreman. We aren’t just losing the “why”; we’re losing the ability to even trace the path.

As we move toward these fragmented architectures, are we just designing more efficient ways to consume massive amounts of energy under the guise of "optimization"?

We’re calling it “optimization” because we’re reducing the energy used per individual query, but we’re ignoring the massive scale-up in total demand. It’s like swapping a gas-guzzling V8 for a tiny electric motor, only to build a fleet of a billion cars. We aren’t necessarily making tech more sustainable; we’re just making it efficient enough to justify its own runaway expansion. It’s a clever shell game.
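
The arithmetic behind that worry is simple enough to sketch. The numbers here are invented purely for illustration (arbitrary energy units, no real measurements): per-query cost falls by 60 percent while the number of queries grows fivefold.

```python
def total_energy(energy_per_query, queries):
    """Total energy is per-query cost times the number of queries served."""
    return energy_per_query * queries

# Hypothetical "before" and "after" scenarios in arbitrary energy units.
before = total_energy(energy_per_query=10, queries=1_000_000)
after = total_energy(energy_per_query=4, queries=5_000_000)

growth = after / before
print(before, after, growth)  # 10000000 20000000 2.0
```

Under these assumptions every query is far cheaper, yet the total bill doubles. Whether real demand grows that fast is an open question, but the per-query number alone can’t answer it.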