I remember sitting in my studio at 2 AM, staring at a screen full of jagged, melting geometry that looked more like a fever dream than a digital twin. I had spent hours trying to force traditional photogrammetry to do what it simply wasn’t built for, wasting battery life and sanity on meshes that felt completely lifeless. That’s when I finally pivoted to Gaussian Splatting for 3D capture, and honestly, it felt like someone finally turned the lights on in a dark room. It wasn’t just a minor upgrade; it was the moment I realized the old way of building 3D worlds was officially hitting a wall.

I’m not here to sell you on the academic hype or drown you in math-heavy papers that leave your head spinning. Instead, I want to give you the straight-up truth about what actually works when you’re out in the field. I’ll walk you through the real-world workflow, the hardware quirks that will drive you crazy, and how to actually get those stunningly realistic results without needing a PhD in computer vision. Let’s cut through the noise and get to the good stuff.

Transcending Traditional 3D Reconstruction Techniques

For a long time, we’ve been stuck in a tug-of-war between speed and quality. If you wanted a highly detailed model, you had to endure hours of agonizingly slow processing. If you wanted something fast, you usually ended up with a chunky, lifeless mesh. Traditional 3D reconstruction techniques have always struggled to bridge that gap, often leaving us with “uncanny valley” results where lighting looks flat and textures feel painted on rather than truly part of the environment.

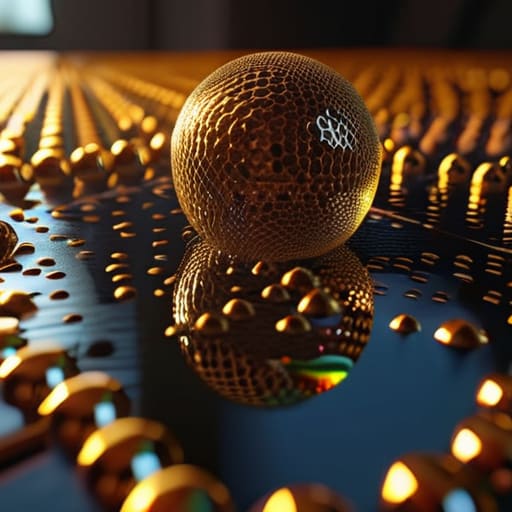

This is where the shift becomes obvious. When you compare point clouds with Gaussian Splatting, the difference isn’t just a technical nuance; it’s a fundamental change in how we handle light. While older methods try to force every surface into a rigid geometric shape, this new approach treats the world as a collection of soft, volumetric particles. Each splat is a 3D Gaussian with its own position, size, orientation, opacity, and view-dependent color. By building on recent advances in neural rendering, we aren’t just building digital statues anymore. We are capturing the actual soul of a space, including the glossy highlights and translucent glows that polygon meshes with baked textures struggle to reproduce.

Achieving High Fidelity Spatial Capture With Ease

The real magic happens when you actually get your hands on the data. In the past, if you wanted a high-quality digital twin, you were looking at hours of heavy processing and a mountain of messy geometry. With these advances in radiance-field rendering, the barrier to entry has dropped dramatically. You aren’t just building a hollow shell of a room; you’re capturing how light actually dances off a glass vase or how shadows soften in a corner. It’s about moving away from rigid meshes and toward a fluid, living 3D scene representation that feels tangible.

Put a traditional point cloud next to a Gaussian splat and the difference is night and day. Point clouds often look like a ghostly, disconnected swarm of dots that falls apart when you move the camera in close. Gaussian Splatting, however, fills those gaps with soft, volumetric “splats” that are sorted by depth and blended together, so surfaces read as continuous. This allows for a level of high-fidelity spatial capture that used to demand a Hollywood-grade studio setup. You get that “wow” factor almost instantly, making the whole workflow feel less like math and more like photography.
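If you’re curious what “blending together” means in practice, here is a minimal sketch of how one pixel might be shaded from a handful of splats. It assumes the splats have already been projected to the screen and treats each one as a round (isotropic) blob, which is a simplification; real renderers use stretched, rotated Gaussians and run on the GPU. The names `Splat2D` and `shade_pixel` are made up for this illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Splat2D:
    """A splat already projected to screen space (hypothetical, simplified)."""
    x: float          # screen position in pixels
    y: float
    depth: float      # distance from the camera, used only for sorting
    sigma: float      # screen-space standard deviation in pixels
    opacity: float    # peak opacity in [0, 1]
    color: tuple      # (r, g, b), each in [0, 1]


def shade_pixel(px, py, splats, background=(0.0, 0.0, 0.0)):
    """Blend splats front to back at pixel (px, py)."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through

    for s in sorted(splats, key=lambda s: s.depth):  # nearest first
        d2 = (px - s.x) ** 2 + (py - s.y) ** 2
        # Opacity falls off smoothly with distance from the splat center.
        alpha = s.opacity * math.exp(-0.5 * d2 / (s.sigma ** 2))
        for c in range(3):
            color[c] += transmittance * alpha * s.color[c]
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:  # pixel is effectively opaque, stop early
            break

    # Whatever light is left over comes from the background.
    return tuple(color[c] + transmittance * background[c] for c in range(3))


# A half-transparent red splat in front of an opaque blue one.
front = Splat2D(x=5, y=5, depth=1.0, sigma=2.0, opacity=0.5, color=(1, 0, 0))
back = Splat2D(x=5, y=5, depth=2.0, sigma=2.0, opacity=1.0, color=(0, 0, 1))
pixel = shade_pixel(5, 5, [back, front])
```

Because every splat fades out softly toward its edge, neighbors overlap and cover the gaps that a raw point cloud would leave as empty space.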

Pro Tips to Stop Your Splats from Looking Like a Mess

- Light is everything. Avoid harsh, direct shadows or moving light sources while shooting: Gaussian Splatting bakes whatever lighting it sees into the color of the splats, so hard shadows stay frozen in place and the scene looks flat and unnatural from other viewpoints.

- Don’t be a speed demon; move slowly and overlap your shots significantly. You need a dense web of photos to give the algorithm enough data to figure out where those little “splats” actually belong in 3D space.

- Watch out for the “shiny stuff” trap. Splats cope with ordinary glossy highlights, but true mirrors and polished chrome are a nightmare for current splatting tech, often resulting in weird, floating artifacts that ruin the immersion.

- Keep your camera settings consistent. Lock ISO, aperture, shutter speed, and white balance before you start. If they drift mid-capture, the software has to reconcile the same surface showing up at different brightness and color, which leads to a noisy, inconsistent reconstruction.

- Master the “loop.” When capturing an object, try to circle it completely and then come back around from different heights. The more angles you cover, the fewer “holes” or blurry patches you’ll have to fix in post-processing.
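To make the “loop” tip concrete, here is a rough sketch that lays out camera positions on rings around an object at several heights. Everything in it is an assumption for illustration: the function name `orbit_positions`, the z-up coordinate convention, and the half-step stagger between rings (my own choice, so that the higher rings fill in angles the lower ones skipped). Treat it as a planning aid, not a rule.

```python
import math


def orbit_positions(radius, heights, shots_per_ring, target=(0.0, 0.0, 0.0)):
    """Return (x, y, z) camera positions on horizontal rings around target.

    radius         -- horizontal distance from the object, same unit as heights
    heights        -- camera heights relative to the target, one per ring
    shots_per_ring -- how many photos to take on each loop
    """
    tx, ty, tz = target
    step = 2.0 * math.pi / shots_per_ring
    positions = []
    for ring_index, height in enumerate(heights):
        # Stagger every other ring by half a step for extra angular coverage.
        offset = 0.5 * step if ring_index % 2 else 0.0
        for i in range(shots_per_ring):
            angle = i * step + offset
            positions.append((
                tx + radius * math.cos(angle),
                ty + radius * math.sin(angle),
                tz + height,
            ))
    return positions


# Three loops (low, eye level, high) with 24 shots each: 72 photos total.
plan = orbit_positions(radius=1.5, heights=[0.3, 1.0, 1.8], shots_per_ring=24)
```

With 24 shots per ring you move 15 degrees between photos; if your scene has fine detail or thin structures, bump the count up rather than down.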

The Bottom Line: Why Gaussian Splatting Matters

It bridges the gap between “good enough” and photorealism, delivering stunningly detailed 3D scenes that traditional photogrammetry just can’t touch.

You get massive speed boosts in your workflow: on a capable GPU, you can go from raw photo captures to an interactive, high-fidelity environment that renders in real time, in a fraction of the time older pipelines needed.

It’s democratizing high-end spatial capture, making professional-grade 3D reconstruction accessible without needing a massive studio or a supercomputer.

## The Death of the Polygon

“We’re finally moving past the era of fighting with jagged meshes and baked-in lighting; Gaussian Splatting lets us stop ‘building’ digital worlds and start actually capturing them exactly as they exist in real life.”

The Future is Rendered

At the end of the day, Gaussian Splatting isn’t just another incremental update in the world of computer vision; it’s a fundamental shift in how we bridge the gap between the physical and digital realms. We’ve moved past the days of wrestling with cumbersome meshes and lighting artifacts that make 3D models look like plastic toys. By leveraging these volumetric primitives, we can now capture the soul of a scene—the subtle reflections, the soft shadows, and the complex textures—with a level of speed and fidelity that was practically science fiction just a few years ago. It’s about making 3D capture accessible, intuitive, and breathtakingly real.

As we look ahead, the implications for everything from filmmaking and gaming to virtual tourism are massive. We are standing on the edge of a new era where the barrier between “taking a photo” and “building a world” is rapidly dissolving. Don’t just watch this technology unfold from the sidelines; start experimenting, start capturing, and start seeing the world through a new lens. The tools are finally catching up to our imagination, and the only real limit now is how much of our reality we choose to reimagine.

Frequently Asked Questions

How much heavy-duty hardware do I actually need to run Gaussian Splatting without my computer melting?

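Let’s get real: you don’t need a NASA supercomputer, but you can’t exactly do this on a budget Chromebook either. The heavy lifting happens on your GPU, and training a scene is far more demanding than viewing one. To keep your fans from sounding like a jet engine, aim for an NVIDIA RTX card with at least 8GB of VRAM; 12GB or more is the sweet spot if you want to play with high-res scenes. Keep in mind that the original reference implementation lists 24GB for training at full paper quality, so on smaller cards expect to lower the image resolution or cap the number of splats. If you’re rocking a Mac, make sure it’s an M-series chip with plenty of unified memory, and note that the reference training code targets CUDA, so you’ll typically be relying on third-party apps or ports.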
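For a feel of where the memory goes, here is a back-of-the-envelope sketch. It assumes the parameter layout used by the original 3D Gaussian Splatting work (position, scale, rotation quaternion, opacity, and spherical-harmonic color coefficients) stored as 32-bit floats. The function names are hypothetical, and the result covers the splat parameters only.

```python
def floats_per_gaussian(sh_degree=3):
    """Number of float values stored for one splat (assumed layout)."""
    position = 3
    scale = 3
    rotation = 4                             # quaternion
    opacity = 1
    sh_color = 3 * (sh_degree + 1) ** 2      # RGB x spherical-harmonic terms
    return position + scale + rotation + opacity + sh_color


def parameter_megabytes(num_gaussians, sh_degree=3, bytes_per_float=4):
    """Rough size of the splat parameters alone, in megabytes (10^6 bytes)."""
    total_bytes = num_gaussians * floats_per_gaussian(sh_degree) * bytes_per_float
    return total_bytes / 1e6


# A scene with one million splats at the default color detail.
size_mb = parameter_megabytes(1_000_000)   # 236.0
```

Training needs a good deal more than this figure, since the GPU also has to hold gradients, optimizer state, and the training images themselves, which is why a card that displays a scene comfortably can still run out of memory while building it.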

Can I use these 3D captures in existing game engines like Unreal Engine or Unity right away?

The short answer? Not quite “plug-and-play” yet, but we’re getting incredibly close. You can’t just drag a raw Gaussian Splat file into Unity and expect it to work like a standard mesh. However, thanks to some brilliant community plugins and new experimental importers for Unreal Engine, you can definitely bring these scenes into your workflow. You’ll mostly be rendering them through a dedicated splat renderer or custom shaders rather than the engine’s normal mesh pipeline, which is what keeps that magic realism intact.

How does the quality of my input photos affect the final "splat," and are there specific settings I should watch out for?

Think of your photos as the raw ingredients: if they’re blurry or poorly lit, your splat will look like a muddy mess. You need sharp, high-resolution shots with plenty of overlap; aim for about 70% to 80% between frames. Avoid heavy motion blur or weird reflections, as those trip up the math. Also, keep your exposure locked (ISO, aperture, and shutter speed); inconsistent brightness across shots is the quickest way to ruin the spatial consistency.
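Two of the checks in this answer, overlap and steady settings, are easy to rough out in code. The sketch below makes some assumptions: the spacing formula is the simple case of sliding the camera sideways along a flat subject, and the per-photo settings are assumed to already sit in plain dictionaries (pulling them out of EXIF is left to whatever tool you prefer). The function names and dictionary keys are hypothetical.

```python
import math


def shot_spacing(distance_m, hfov_deg, overlap):
    """How far to move sideways between shots, in meters.

    distance_m -- distance from camera to the subject
    hfov_deg   -- horizontal field of view of the lens, in degrees
    overlap    -- desired overlap between neighbors, e.g. 0.75 for 75%
    """
    footprint = 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)
    return footprint * (1.0 - overlap)


def inconsistent_settings(frames, keys=("iso", "f_number", "shutter_s", "white_balance")):
    """Return {setting: distinct values} for every setting that varies."""
    varying = {}
    for key in keys:
        values = {frame.get(key) for frame in frames}
        if len(values) > 1:
            varying[key] = sorted(values, key=str)
    return varying


# Shooting a wall from 2 m with a 90-degree lens at 75% overlap.
step_m = shot_spacing(distance_m=2.0, hfov_deg=90.0, overlap=0.75)

# Two frames where the ISO drifted mid-capture.
frames = [
    {"iso": 100, "f_number": 8.0, "shutter_s": 0.01, "white_balance": "daylight"},
    {"iso": 400, "f_number": 8.0, "shutter_s": 0.01, "white_balance": "daylight"},
]
problems = inconsistent_settings(frames)   # {'iso': [100, 400]}
```

If `inconsistent_settings` comes back non-empty, that is a hint to reshoot with everything locked, or at least to expect some flicker in the final splat.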